AI and Trust ⊗ This Is Your Brain on Books ⊗ Should Synthetic Biology Be the Next Decade’s Big Bet to Tackle Climate Change?

No.291 — Crimes against search ⊗ Why ‘climate havens’ might be closer to home than you’d think ⊗ Exploring alternative futures in the anthropocene ⊗ The Wizard of AI

AI and trust

Bruce Schneier with an intriguing angle on AI and how to regulate it: trust is essential to society, humans are trusting by nature, and AI is poised to break that trust. There are two types of trust, interpersonal and social; the latter scales better but is more prone to bias and prejudice. Confusion between the two will increase with the rise of artificial intelligence, as people may start to think of AIs as friends when they are actually just services. The corporations controlling AI systems may take advantage of this confusion, making those systems untrustworthy.

A lot of the social trust around us is created by governments through legislation and consequences. Therefore, in Schneier’s argument, it is the role of government to create an environment for trustworthy AI through regulation of the organizations that control and use AI. This is where the important twist comes in; in such a framing, companies would be responsible for their AIs and regulated through how they build and use them, instead of trying to concentrate on what the AI does and curtailing it at the end of the line.

Schneier then proposes some solutions around fiduciaries and the creation of public AI models, “systems built by academia, or non-profit groups, or government itself, that can be owned and run by individuals.”

We can connect this to a few things. First, the whole vibe and tone around Google’s Gemini demo this week, where the presenter sounds like he’s speaking to a toddler or a pet. It is clearly skewed towards a trusting relationship rather than something like using an app. The corporation also basically exaggerated (faked) what exactly Gemini can do.

We can also see the social trust created by governments as a form of infrastructure, social infrastructure, and squinting a bit, perhaps as a commons. Alex Tabarrok has a short piece on India’s experiments with digital public goods, which are adjacent. And finally, there’s this piece by Margaret Mitchell proposing a rights-based approach to AI development, which, like Schneier with trust, advocates for regulation of AI at the source: how companies develop it and how they need to be transparent in what they create.

The natural language interface is critical here. We are primed to think of others who speak our language as people. And we sometimes have trouble thinking of others who speak a different language that way. We make that category error with obvious non-people, like cartoon characters. We will naturally have a “theory of mind” about any AI we talk with. […]

And you will want to trust it. It will use your mannerisms and cultural references. It will have a convincing voice, a confident tone, and an authoritative manner. Its personality will be optimized to exactly what you like and respond to. […]

All of this is a long-winded way of saying that we need trustworthy AI. AI whose behavior, limitations, and training are understood. AI whose biases are understood, and corrected for. AI whose goals are understood. That won’t secretly betray your trust to someone else. […]

We can never make AI into our friends. But we can make them into trustworthy services—agents and not double agents. But only if government mandates it. We can put limits on surveillance capitalism. But only if government mandates it.

This is your brain on books

This article by Elyse Graham at Public Books weaves two books together: Christopher de Hamel’s The Manuscripts Club and Adrian Johns’ The Science of Reading. The first “is a group biography, reaching back to the Middle Ages and forward to the 20th century, of the old and affable brotherhood (and sisterhood) of manuscript lovers,” while the second seeks to explain how we read. I won’t summarise further; the fun is in reading the quirky details Graham found in the two books. Have a read.

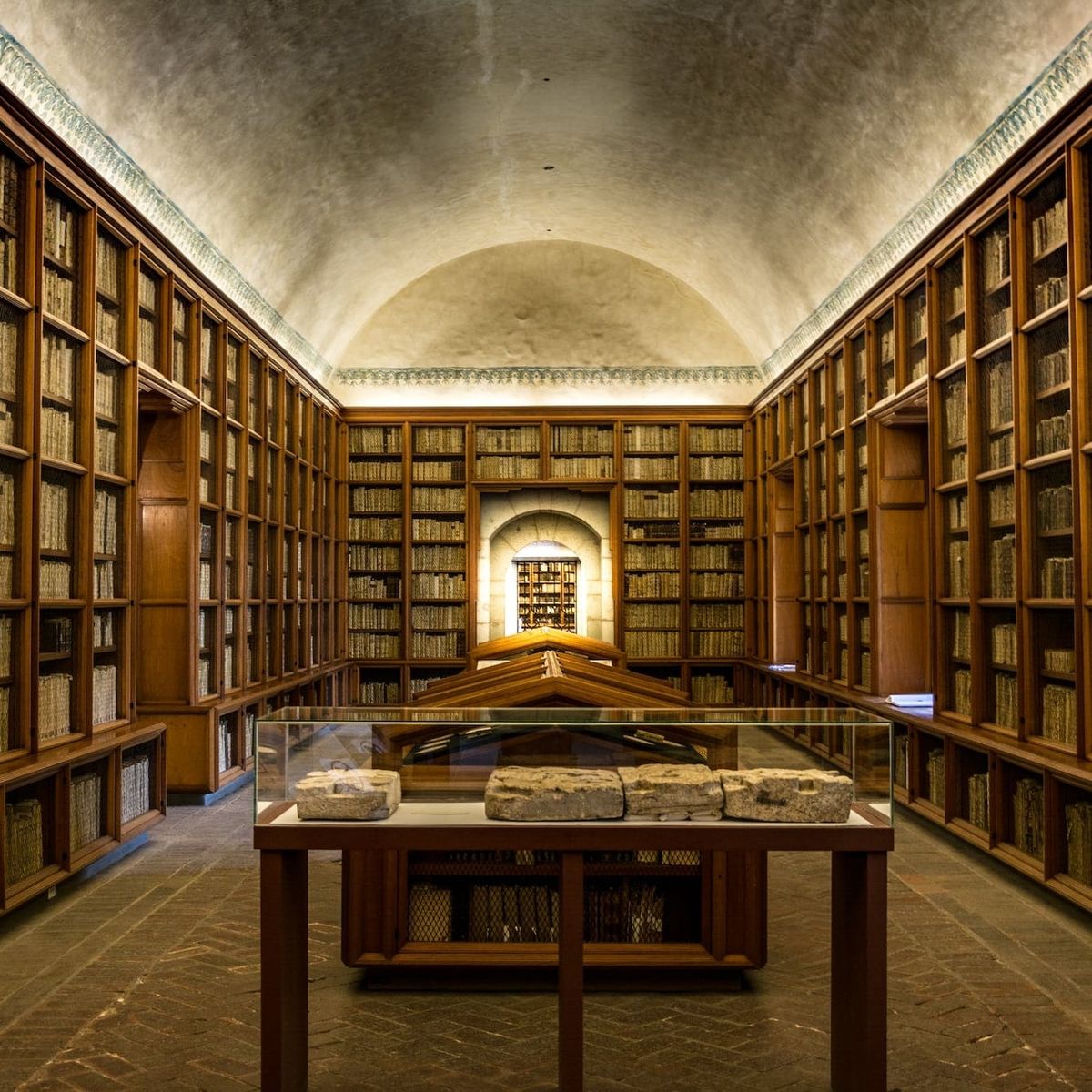

On books still, I almost featured this one instead. If the two books above pair well in the piece, the article itself pairs equally well with Benjamin Breen’s The open-stack library: a futuristic technology from the 18th century, which dives into the transformation of libraries from chained luxury things to open-stacked third places that functioned “as community centers, offering cards, games, and even dancing ‘after the blinds had been drawn to cover the bookshelves.’” Breen also writes about the organising principles of these libraries and shows hints of how they led to current digital tools for finding knowledge.

Johns seeks to explain how we read; de Hamel seeks to explain why. Johns focuses on the part of reading that happens between the eyes and the brain; de Hamel, the part of reading that happens between the world and the reader. Johns emphasizes solitude and science; de Hamel, community and, metaphorically, magic. […]

“Reading is a good thing, we like to believe that it is a fundamental element of any modern, enlightened, and free society. We may even think of it as the fundamental element. It has long been standard to identify the emergence of contemporary virtues like democracy, secularism, science, and tolerance with the spread of literacy that occurred in the wake of Johannes Gutenberg’s invention of printing in the fifteenth century.” […]

[From the second piece.] The open stack library is like an early modern index that has been turned into a building. It’s organized but eclectic, it rewards browsing, and it allows you to pursue individualized interests within a “social” framework (everyone has the same index and the same library shelves, as opposed to personalized algorithmic recommendations which put us in silos).

Should synthetic biology be the next decade’s big bet to tackle climate change?

I was not aware of this format at Nesta: “Tech in the Dock puts an emerging technology on trial to examine its potential impact on society.” In this case they present synthetic biology, with a number of examples, and the potential pros like reducing emissions, climate repair, bioremediation, and adaptation. These are followed by some of the potential cons, like unknown environmental impacts, questionable ethics, the complexity of global coordination, a lack of readiness, and the question of who foots the bill and who reaps the reward. Finally, they look at what a big bet might look like.

Can’t say the format brought anything more interesting than a regular article would, but it’s well linked, so it’s a good diving-in point to learn more.

Although there’s been lots of progress, most experts agree that at current rates the technology is unlikely to be sufficiently developed, commercialised and scaled quickly enough to play a meaningful role in the time left to keep the planet under two degrees of global warming. So we could be timed out on relying on synthetic biology for mitigating climate change before it happens. […]

Helping to guide application of the technology to address climate change: an implicit or explicit mission-focused approach to synthetic biology for the climate would use levers such as making innovation funding more targeted to applications and outcomes, greater public investment in larger-scale infrastructure or strategic use of governments’ buying power to support synthetic-biology-derived green products or services.

§ Crimes against search. “In flooding the internet with bogus content aimed at nabbing a quick dollar, Ward was ‘shitting in the pool’ of the ‘information commons.’ The fury pointed at a collective effort to maintain and feed an information commons we can all use meaningfully, that is constantly being undermined by greed.”

§ Why ‘climate havens’ might be closer to home than you’d think. “Climate havens may not be something nature hands us, but something we have to build ourselves. And finding refuge doesn’t necessarily entail moving across the country; given the right preparations, it could be closer to home than you think.”

Futures, fictions & fabulations

Exploring alternative futures in the anthropocene

“Many challenges posed by the current Anthropocene epoch require fundamental transformations to humanity's relationships with the rest of the planet. Achieving such transformations requires that humanity improve its understanding of the current situation and enhance its ability to imagine pathways toward alternative, preferable futures.”

Designing a youth-centred journey to the future

A new playbook by the UNICEF Office of Global Insight & Policy and a worthy mission. “This youth foresight playbook aims to facilitate a more inclusive approach by offering ways to engage youth as co-authors and co-owners in foresight and decision making.”

An interview with futures leader Anab Jain

Julia Kloiber interviewing Anab Jain for Ding Magazine. “We learned more about Superflux’s current projects, methodologies and approach as well as some of the most important aspects of the studio’s work.”

Algorithms, Automation, Augmentation

The Wizard of AI

“Alan Warburton was commissioned by the ODI's Data as Culture programme to bring us 'The Wizard of AI,' a 20-minute video essay about the cultural impacts of generative AI. It was produced over three weeks at the end of October 2023, one year after the release of the infamous Midjourney v4, which the artist treats as "gamechanger" for visual cultures and creative economies. According to the artist, the video itself is "99% AI" and was produced using generative AI tools like Midjourney, Stable Diffusion, Runway and Pika.”

Unauthorized “David Attenborough” AI clone narrates developer’s life, goes viral

Another one for the “wow, but” file. “Developer Charlie Holtz combined GPT-4 Vision and ElevenLabs voice cloning technology to create an unauthorized AI version of the famous naturalist David Attenborough narrating Holtz’s every move on camera.”

Asides

- 😍 ✍🏼 🎅🏼 ‘I thought about that a lot’ is a collection of 24 essays by 24 authors. “Each author describes one thing they have thought about a lot in 2023. A new essay will be published each day in the countdown to Christmas.”

- 💨 ⚡️ 🤔 Weird but intriguing. AirLoom Energy: Utility-Scale Wind Energy at Extremely Low Cost. “Airloom harnesses the power of the wind to propel wings along a lightweight track. Our unique geometry generates the same amount of electricity as conventional turbines at a fraction of the cost.” (Via Anthropocene Magazine.)

- 🤩 🧱 🇮🇳 A Threshold disguises community centre in India “as ancient ruins”. “Appropriately called Subterranean Ruins, the building is dug into a steeply sloping site overgrown with mango, banana and coconut trees and designed as a freely accessible, multifunctional centre for the village of Kaggalipura.”

- 🗺️ ⛽️ 📊 Climate TRACE. “We make meaningful climate action faster and easier by mobilizing the global tech community to track greenhouse gas (GHG) emissions with unprecedented detail and speed and provide this data freely to the public.” (Via Future Crunch.)

- 🧬 💩 Genetic testing firm 23andMe admits hackers accessed DNA data of 7m users. “The company confirmed to TechCrunch on Saturday that because of an opt-in feature that allows DNA-related relatives to contact each other, the true number of people exposed was 6.9 million – or just less than half of 23andMe’s 14 million reported customers.”

- 💪🏼 🌳 🇮🇩 Planting Mangrove Forests Is Paying Off in Indonesia. “Paying local residents to plant mangroves has raised incomes, increased fishery output, protected coastal areas, and contributed to efforts to mitigate climate change.” (Also via Future Crunch.)